3 Ways to Perform a Worst-Case Stack Analysis

Figuring out how to size the stack for an embedded application and the tasks within it can be challenging. In many cases, developers will pick a value that they feel should be enough. These estimates are sometimes a little short, most of the time a rough guess, and rarely spot on. While I always encourage developers to monitor their stack usage throughout their development cycle, there are times when a developer should perform a worst-case stack analysis, such as when:

- They are running dangerously low on RAM

- They need to commit a new code version

- They are finalizing the firmware for production

In this post, I will discuss three different ways that a developer can perform a worst-case stack analysis.

Technique #1 – Calculating by hand

In the days of old, embedded software developers had to calculate their stack usage by hand, which can be tricky business. To do so, developers needed to know:

- How many function calls deep they were going to go

- The local variables that would be stored on the stack in each of those functions

- The size of the return address that will be stored on the stack

- The size of the local variables that will be stored on the stack

- How big an interrupt frame will be if an interrupt occurs during execution

- The number of nested interrupts that could occur

As you can imagine, finding all these values and continuing to track them as changes are made can be quite time-consuming and error-prone. That’s why this method is no longer recommended, but it’s still useful to attempt once to gain a deeper appreciation of what the other techniques are doing.
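
To make the bookkeeping concrete, here is a minimal sketch in C of the hand calculation described above. The struct, the function names, and every byte count are hypothetical. Real numbers have to come from the code your compiler generates for your target, and any stack used by the interrupt handlers themselves would need to be folded into the per-interrupt figure.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Stack cost of one function on the deepest call path (hypothetical values). */
typedef struct {
    const char *name;        /* function name, for documentation only */
    uint32_t    locals;      /* bytes of local variables on the stack */
    uint32_t    return_addr; /* bytes used to store the return address */
} frame_cost_t;

/* Sum the cost of every function along one call path. */
uint32_t call_path_bytes(const frame_cost_t *path, size_t depth)
{
    uint32_t total = 0;

    assert(path != NULL || depth == 0);
    for (size_t i = 0; i < depth; i++) {
        total += path[i].locals + path[i].return_addr;
    }
    return total;
}

/* Worst case = deepest call path + one interrupt frame per nesting level. */
uint32_t worst_case_stack_bytes(const frame_cost_t *path, size_t depth,
                                uint32_t irq_frame_bytes,
                                uint32_t max_nested_irqs)
{
    return call_path_bytes(path, depth) + irq_frame_bytes * max_nested_irqs;
}
```

For example, a three-deep call path costing 20, 36, and 28 bytes, plus two nested 32-byte interrupt frames, works out to 84 + 64 = 148 bytes.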

Technique #2 – Use a static code analyzer

Many static code analyzers can be used to estimate what the worst-case stack usage will be. During the code analysis, the tool will determine the function depth along with many of the items that we listed earlier. The nice thing about using a static analyzer is that it isn’t just performing a stack analysis but also checking for potential issues with the code. It also runs in a matter of seconds, which saves a developer from having to calculate the stack usage by hand.

While using a static code analyzer to get your worst-case stack usage is a good way to go, there are several potential issues that developers need to watch out for. These include:

- Dereferencing a function pointer is not counted as a function call

- Interrupt frames are not considered

It’s important to understand how your tool handles these items. In order to get an accurate result, one must often conditionally compile the function pointers out into function calls during the static code analysis using a macro or compiler symbol. You’ll also have to then add in what you believe the interrupt stack usage will be. These are minor issues, but they are important to understand in any analysis.
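
One way the function pointer workaround might look is sketched below in C. The `STACK_ANALYSIS` symbol, the `DISPATCH` macro, and the two handlers are all assumptions made up for illustration; your analyzer may offer its own annotation mechanism that makes this unnecessary.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical handlers that can be reached through the pointer. */
uint32_t handler_a(uint32_t x) { return x + 1u; }
uint32_t handler_b(uint32_t x) { return x * 2u; }

typedef uint32_t (*handler_fn_t)(uint32_t);

/* STACK_ANALYSIS is an assumed symbol, defined only for the build that is
 * fed to the static analyzer (for example with -DSTACK_ANALYSIS). */
#ifdef STACK_ANALYSIS
/* Analysis build: call every possible target directly so the tool sees each
 * call edge. The argument is evaluated twice and the result is meaningless,
 * which is acceptable only because this build is never executed. */
#define DISPATCH(fn, arg) ((void)(fn), (handler_a(arg) | handler_b(arg)))
#else
/* Normal build: a plain call through the function pointer. */
#define DISPATCH(fn, arg) ((fn)(arg))
#endif

uint32_t run_handler(handler_fn_t fn, uint32_t value)
{
    assert(fn != NULL);
    return DISPATCH(fn, value);
}
```

In the normal build, `run_handler(handler_a, 4)` simply calls through the pointer. In the analysis build, the tool sees direct calls to both handlers and can pick whichever uses more stack.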

Technique #3 – Test and measurement

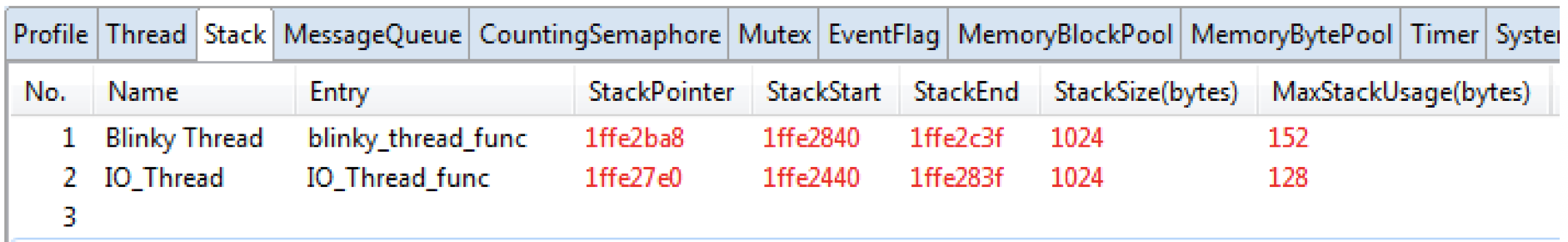

The technique that I advocate most often for worst-case stack analysis is to test and measure the system. Many development environments now have tools to perform OS-aware debugging, which will closely monitor the RTOS performance, including the maximum stack usage while the application was running. A great example can be seen in the accompanying image from within e2 Studio for the Renesas Synergy Platform, which uses ThreadX.

As you can see, each thread (task) is listed along with the memory location, stack pointer and the maximum stack usage. We can even see how much memory is allocated to the stack. This provides developers not just a great way to keep tabs on their stack usage but also determine what their maximum stack usage is.

Developers do need to be careful with the maximum value presented to them. It’s important that the readings be taken while their system is under the greatest stress. For an RTOS-based application, the interrupt frames are often stored on the system stack, so we don’t need to be concerned with sizing each thread to have enough memory for an interrupt storm.
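
If your environment has no OS-aware view, a common way to get a similar maximum-usage figure is to fill the stack with a known pattern before the task starts and later count how much of the pattern is still intact. The sketch below assumes a descending stack and an arbitrary fill value; the function names are hypothetical and do not belong to any particular RTOS.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define STACK_FILL_PATTERN 0xA5u /* arbitrary marker value (assumption) */

/* Fill the whole stack region with the marker before the task runs. */
void stack_paint(uint8_t *stack_base, size_t size)
{
    assert(stack_base != NULL);
    for (size_t i = 0; i < size; i++) {
        stack_base[i] = STACK_FILL_PATTERN;
    }
}

/* Return the maximum number of bytes used so far.
 * Assumes a descending stack: usage starts at the high address and grows
 * toward stack_base, so the untouched marker bytes sit at the low end. */
size_t stack_high_water_bytes(const uint8_t *stack_base, size_t size)
{
    size_t untouched = 0;

    assert(stack_base != NULL);
    while (untouched < size && stack_base[untouched] == STACK_FILL_PATTERN) {
        untouched++;
    }
    return size - untouched;
}
```

The result can read slightly low if the deepest bytes written happen to equal the fill pattern, and, as with any measurement, it only reflects the paths that were actually exercised.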

Conclusions

No matter which technique you use to determine your stack usage, it’s still a good practice to oversize the stack a bit. It’s possible that the worst case was never reached during testing, which could set the system up for a stack overflow once it is in the field.
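
As a rough sketch of what oversizing might look like in practice, the helper below adds a percentage margin to a measured or calculated figure and rounds the result up to an alignment boundary. The function name, the margin, and the alignment are assumptions; the right values depend on your application and target.

```c
#include <assert.h>
#include <stddef.h>

/* Add a safety margin to a worst-case figure, then round up to the stack
 * alignment. The margin is rounded up to a whole byte. */
size_t stack_size_with_margin(size_t worst_case_bytes,
                              size_t margin_percent,
                              size_t alignment)
{
    size_t margin;
    size_t padded;

    assert(alignment > 0);
    margin = (worst_case_bytes * margin_percent + 99u) / 100u;
    padded = worst_case_bytes + margin;

    /* Round up to the next multiple of alignment. */
    return ((padded + alignment - 1u) / alignment) * alignment;
}
```

For example, a 148-byte worst case with a 25% margin and 8-byte alignment becomes 148 + 37 = 185 bytes, rounded up to 192.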

In this article we have examined several techniques that we can use to calculate our worst-case stack usage. We’ve touched on just a few highlights. There are also several additional methods developers can use, such as tools like Percepio Tracealyzer or uC Probe that monitor the stack memory directly. There are even methods that rely on calculations that are performed by the compiler and linker. For today these are beyond our scope but if you are interested in these methods, I recommend reading this article. As we have seen, it’s very easy using modern tools to monitor the stack usage throughout the development cycle. It’s highly recommended that as developers write their software, they monitor and adjust their stack sizes accordingly for the most efficient system possible.

Nice article, however a bit off-the-point.

Manual calculation cannot be done due to complexity, besides you need to check the assembly code generated by the compiler/optimizer to see the actual stack usage.

Measuring the stack usage in general does not provide the worst-case stack usage, it just provides the stack usage for the worst case that was tested, which may be far away from the absolute maximum. The latter, however, is likely to happen during a product’s lifetime but not likely to be hit by a test case.

So the only way to really determine the worst case is the tooling-based approach. However, if you have a complex software system, such an analysis may easily take more than a week, due to all the annotations you’ve got to do. If you are using a lot of function pointers, this is a lot of work. Some tools do not operate on the source code but on the binary, which is actually to be preferred since only this way you get the real thing analyzed (tools do not always interpret #ifdefs correctly), but then you also need to look into the disassembly when annotating function pointers. So the effort can be really high, the process still error-prone, and only if you do really good work do you get reliable worst-case numbers.

So 2 approaches deliver guesstimates and the probability for the 3rd approach delivering exact numbers decreases with the project size increasing.

ok

very funny

Thanks for the comments. Manual calculation can indeed be done. I’ve done it and have had clients who have also done it with good results. Just because something is complex does not mean that it cannot be done. When I first started in this field more than 15 years ago the only way to perform a worst case stack analysis was in fact to do it manually. Very few tools, if any, could give an answer back then.

For the rest of your comments, I believe those were covered in the article. Worst case for a stack analysis is always “worst case”. There is no guarantee even with the most sophisticated tool that you will get an accurate result. This is why you look at your results, your methodology and then provide some buffer for the production unit.

You can also use the MPU and several other methods to test your “worst” case in order to ensure that it is appropriate for the application.