Tips and Tricks – Sleep on Exit

One common software architecture for a low power system keeps the system in a sleep mode at all times, waking up only to run a single interrupt service routine (ISR) and then immediately going back to sleep. If the developer is trying to squeeze every last mAh out of the battery, this approach has one serious and often overlooked flaw: a lot of time and clock cycles are wasted just getting into and out of each interrupt.

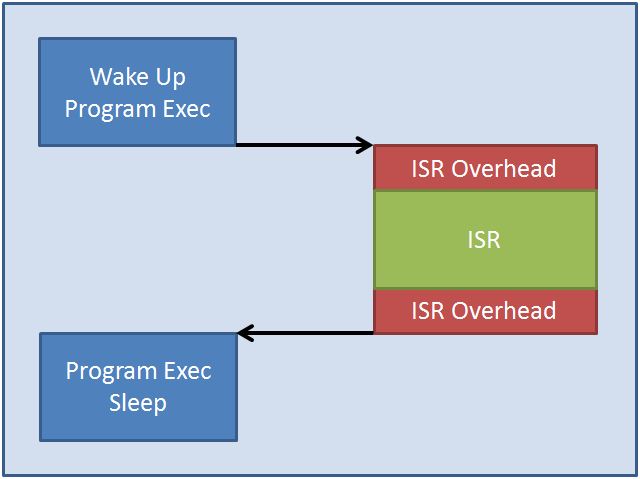

Executing an interrupt is a multi-step process. First, register values and other current state information are pushed onto the stack so they can be restored later. Next, the CPU vectors to the ISR and executes the ISR code, and finally it pops the stack to restore the registers to their original state. The flowchart in Figure 1 summarizes this entire sequence.

Figure 1 – Standard ISR Operation
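
To make the first step concrete: on an ARM Cortex-M core the push is done by hardware, which stacks a basic frame of eight 32-bit registers (R0 to R3, R12, LR, PC and xPSR) on interrupt entry and pops it again on exit; when a floating-point context is active, a larger frame is pushed. The C below is only a model of that basic frame for illustration; the type name `exception_frame_t` is hypothetical and is not part of CMSIS.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Model of the basic (non-FPU) exception stack frame a Cortex-M core
 * pushes in hardware on interrupt entry, from lowest to highest address.
 * Hypothetical type name, for illustration only. */
typedef struct {
    uint32_t r0;
    uint32_t r1;
    uint32_t r2;
    uint32_t r3;
    uint32_t r12;
    uint32_t lr;    /* link register of the interrupted code */
    uint32_t pc;    /* return address: where the interrupted code resumes */
    uint32_t xpsr;  /* program status register */
} exception_frame_t;

/* Eight words (32 bytes) are written on entry and read back on exit. */
static_assert(sizeof(exception_frame_t) == 8u * sizeof(uint32_t),
              "basic frame is eight 32-bit words");
static_assert(offsetof(exception_frame_t, pc) == 6u * sizeof(uint32_t),
              "stacked PC is the seventh word of the frame");
```

Every interrupt therefore costs at least 32 bytes of stack writes on the way in and 32 bytes of reads on the way out, and that round trip is the overhead this article is concerned with.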

The problem is that even with modern, fast processors, pushing all of those registers onto the stack and recovering them afterwards is inefficient. Each round trip may take only a few clock cycles, perhaps just nanoseconds, but over millions or billions of interrupts this adds up to a considerable amount of time that could have been spent in a low power mode. The result is wasted battery power!
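
A back-of-the-envelope version of that argument fits in a small C helper: overhead time equals the number of interrupts times the overhead cycles per interrupt, divided by the core clock. The figures below are illustrative assumptions, not measurements: 24 overhead cycles per interrupt (roughly 12 for stacking plus 12 for unstacking, in the ballpark of a Cortex-M3/M4 with zero-wait-state memory), a 48 MHz core clock and 1,000 interrupts per second. The helper and macro names are hypothetical.

```c
#include <assert.h>
#include <stdint.h>

/* Illustrative assumptions only, not measured values. */
#define ASSUMED_OVERHEAD_CYCLES 24u        /* ~12 stacking + ~12 unstacking */
#define ASSUMED_CORE_CLOCK_HZ   48000000u  /* 48 MHz core clock */
#define ASSUMED_IRQ_RATE_HZ     1000u      /* one interrupt per millisecond */
#define SECONDS_PER_YEAR        (365ull * 24u * 60u * 60u)

/* Number of interrupts that fire in a period at a fixed rate. */
static uint64_t interrupts_in_period(uint32_t rate_hz, uint64_t period_s)
{
    return (uint64_t)rate_hz * period_s;
}

/* Seconds the core stays awake purely for interrupt entry/exit overhead. */
static double isr_overhead_seconds(uint64_t interrupt_count,
                                   uint32_t overhead_cycles_per_isr,
                                   uint32_t core_clock_hz)
{
    assert(core_clock_hz > 0u);
    return (double)interrupt_count * (double)overhead_cycles_per_isr
           / (double)core_clock_hz;
}
```

With those assumed figures, one year at 1,000 interrupts per second is 31,536,000,000 interrupts, which works out to about 15,768 seconds (roughly 4.4 hours) of extra awake time spent only on stacking and unstacking. How much battery that costs depends on the run and sleep currents of the specific part.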

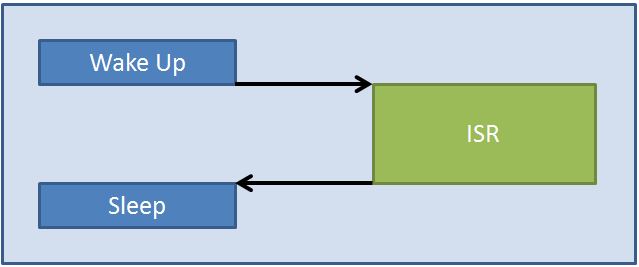

There is a really cool feature in ARM Cortex-M microcontrollers known as Sleep on Exit, controlled by the SLEEPONEXIT bit in the System Control Register. Normally the processor returns from the ISR to the main program every time, only to go back to sleep and pay the stack overhead again on the next interrupt. With this feature enabled, the MCU goes straight back to sleep as soon as the ISR completes, without restoring the interrupted context. When the interrupt fires again, the system wakes up and immediately executes the ISR with minimal overhead. The result can be seen in Figure 2.

Figure 2 – ISR Overhead with Sleep On Exit
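
As a minimal sketch of enabling the feature, the C below sets the SLEEPONEXIT bit (bit 1) of the System Control Register, which CMSIS exposes as `SCB->SCR` and `SCB_SCR_SLEEPONEXIT_Msk`. So that it compiles and can be checked on a desktop, the sketch assumes host-side stand-ins for `SCB` and `__WFI()`; on real hardware those come from the vendor's CMSIS device header and the stand-in section should be dropped. The function names are hypothetical.

```c
#include <assert.h>
#include <stdint.h>

/* ---- Host-side stand-ins (assumption, for desktop testing only) ----
 * On a Cortex-M target, SCB, SCB_SCR_SLEEPONEXIT_Msk and __WFI() come
 * from the CMSIS device header, which defines SCB and skips this block. */
#ifndef SCB
typedef struct {
    volatile uint32_t SCR;   /* System Control Register (only field modelled) */
} SCB_Type;
static SCB_Type scb_mock;
#define SCB (&scb_mock)
#define SCB_SCR_SLEEPONEXIT_Pos 1U
#define SCB_SCR_SLEEPONEXIT_Msk (1UL << SCB_SCR_SLEEPONEXIT_Pos)
static unsigned wfi_count;                   /* counts simulated WFI calls */
#define __WFI() ((void)wfi_count++)
#endif

/* Sleep again as soon as the last ISR returns, instead of resuming main(). */
static void sleep_on_exit_enable(void)
{
    SCB->SCR |= SCB_SCR_SLEEPONEXIT_Msk;
}

/* Let the next ISR return to main() (Thread mode) as usual. */
static void sleep_on_exit_disable(void)
{
    SCB->SCR &= ~SCB_SCR_SLEEPONEXIT_Msk;
}

/* Hypothetical hand-off point for an interrupt-driven application. */
static void enter_interrupt_driven_mode(void)
{
    sleep_on_exit_enable();
    __WFI();   /* on hardware: sleep; main() does not resume while the bit is set */
}
```

In this style of architecture, main() finishes initialization, calls something like `enter_interrupt_driven_mode()`, and from then on all work happens in ISRs. If an ISR later needs main() to run again, clearing the bit before the ISR returns (as `sleep_on_exit_disable()` does) lets the core return to Thread mode instead of going back to sleep.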

The real savings from this feature, though, show up in applications where other low power design techniques aren't used or where there isn't time in the design cycle to optimize for energy. If Sleep on Exit is built into the software architecture as one of the first optimizations, it could save a few mA of current. However, if it is added at the end, after most of the other optimizations are already in place, the savings will most likely be minimal.