Defining the Meaning of Software Quality

It’s not uncommon for a software library, vendor, or team to claim that they develop quality software. The problem with this claim is that different developers and teams can interpret quality quite differently. One team may consider any code base that meets MISRA-C to be a quality code base, while another may only care that every function’s cyclomatic complexity is ten or less. Others may simply run a few test cases and claim that their software is bug free and therefore quality software. Since everyone has their own definition of quality, it’s imperative that teams define quality in a way that is not just documented but also measurable. In this post, we will explore several measurable software metrics that can be used to define software quality.

Adhering to Industry Best Practices (Standards)

The embedded systems industry is full of standards intended to help developers avoid the pitfalls and pains experienced by the developers who came before them. These standards vary in focus, from simple stylistic standards to standards like MISRA-C that define a subset of the C language for developers to follow. Developing software to a standard helps ensure that common pitfalls will be avoided and elevates the quality of the software.

When it comes to verifying compliance with a coding standard, two components are usually required: automated tool analysis and code reviews. For example, MISRA-C is a very common coding standard that developers follow. A static code analyzer can verify the large majority of the guidelines in the standard (probably around 90%), but some just can’t be verified by a tool. To ensure that the standard is met, developers need to perform periodic code reviews and manually check the remaining guidelines. Meeting a standard can be a great way to ensure that a minimum level of quality is met within the software.

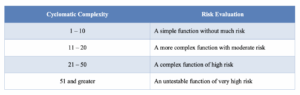

Minimizing Cyclomatic Complexity

One of my favorite quality measurements, which I believe every development team should monitor and add to their definition of quality, is the McCabe Cyclomatic Complexity measurement. This is a simple measurement that can be performed on any function to determine the number of linearly independent paths through it. Functions with a value of ten or less are considered to be simple functions that are easily tested and maintained. Beyond ten, though, the number of paths that must be tested grows, and the function becomes more difficult and error prone to develop and maintain, which is a sign of lower quality code. In fact, as the complexity number climbs past 20, it becomes nearly impossible to test the function thoroughly. If you can’t test a function properly, how can you show that it works correctly?
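As a rough illustration of how the number is arrived at, consider the hypothetical function below. The name `classify_temperature`, the enum, and the thresholds are invented for this sketch. For a function with a single entry and a single exit, a common shortcut is to count the decision points and add one.

```c
#include <stdint.h>

/* Hypothetical example: names and thresholds are invented for illustration. */
typedef enum {
    TEMP_FAULT,
    TEMP_COLD,
    TEMP_NORMAL,
    TEMP_HOT
} temp_state_t;

/*
 * Decision points: three `if` conditions plus one `||` operator = 4.
 * Cyclomatic complexity = decision points + 1 = 5.
 */
temp_state_t classify_temperature(int16_t deg_c)
{
    temp_state_t state = TEMP_NORMAL;

    if ((deg_c < -40) || (deg_c > 125)) {   /* decisions 1 and 2 */
        state = TEMP_FAULT;
    } else if (deg_c < 0) {                 /* decision 3 */
        state = TEMP_COLD;
    } else if (deg_c > 85) {                /* decision 4 */
        state = TEMP_HOT;
    }

    return state;
}
```

A value of 5 sits comfortably under the threshold of ten. Note that some tools do not count short-circuit operators such as `||` and would report 4 for the same function, so it is worth checking how your tool counts before comparing numbers across tools.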

Measuring complexity can also be an automated process. There are free tools available, such as CCCC and the Eclipse Metriculator plugin, that can be used to measure cyclomatic complexity. There are also tools such as Understand that can be used to gather metric information about a code base. The key to being successful with this metric is first to decide to measure it, and second to perform the measurement before checking new code into the code base. The measurement should also run on a continuous integration server.

Compilation with No Warnings

I encounter so many software libraries and code bases that don’t compile without warnings. Warnings are the compiler’s way of telling a developer that they are doing something that doesn’t quite seem right. Given that most compilers will let developers do some horrendous things in code, the fact that the compiler is calling a developer out with a warning means that the developer should be paying attention! Compiling without a single warning is an easy-to-measure metric that shows the software meets a quality level that other pieces of software may not. It’s still possible there could be bugs or other quality issues, but at least the code is free of the questionable constructs that the compiler knows how to detect.
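As a small illustration, here is a sketch of a function written to compile cleanly with GCC using `-Wall -Wextra -Werror`. The helper `sum_bytes` is hypothetical and exists only for this example. The comment describes a first draft that would have drawn a warning.

```c
#include <stddef.h>
#include <stdint.h>

/*
 * Hypothetical helper, invented for illustration.
 *
 * A first draft might have used a signed loop index:
 *
 *     for (int i = 0; i < len; i++) { ... }
 *
 * With GCC, -Wextra reports the comparison between a signed and an
 * unsigned operand (-Wsign-compare). Using size_t for the index removes
 * both the warning and the underlying risk.
 */
uint32_t sum_bytes(const uint8_t *data, size_t len)
{
    uint32_t sum = 0U;

    for (size_t i = 0U; i < len; i++) {
        sum += (uint32_t)data[i];   /* explicit widening, no implicit sign change */
    }

    return sum;
}
```

Adding `-Werror` turns every warning into a build failure, which is one way to make "zero warnings" an enforced metric rather than a good intention. The exact set of warnings enabled by each flag varies by compiler and version, so treat the flags here as a starting point.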

Code Testing Coverage

I would argue that one of the greatest deficiencies I see in our industry’s development cycle is the lack of tests that achieve 100% code coverage. In fact, the problem isn’t reaching 100% code coverage; it’s simply understanding how much of the code the tests are actually covering! It would be one thing if a team knew they were covering 85% of their software with their test cases, but most teams don’t even know that. Code coverage can be a great metric to track to show the level of software quality. All else being equal, code that has been tested to 85% coverage is likely to be more robust and of higher quality than code that was tested to only 50%. Developers can measure this value and use it as an internal metric for code quality. We’ll look at how to do this in a future post.
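In the meantime, a small sketch can make the idea concrete. The function and test below are hypothetical, and the test is deliberately incomplete. With GCC, one way to see the gap is to build with `--coverage`, run the test, and then run `gcov` on the source file to get a per-line execution report.

```c
#include <stdbool.h>
#include <stdint.h>

/* Hypothetical function under test: converts a raw reading to 0..100 %. */
uint8_t adc_to_percent(int32_t raw, int32_t full_scale)
{
    uint8_t percent;

    if (full_scale <= 0) {
        percent = 0U;       /* path A: invalid configuration */
    } else if (raw <= 0) {
        percent = 0U;       /* path B: at or below range */
    } else if (raw >= full_scale) {
        percent = 100U;     /* path C: at or above range */
    } else {
        /* path D: in range; 64-bit intermediate avoids overflow */
        percent = (uint8_t)(((int64_t)raw * 100) / full_scale);
    }

    return percent;
}

/*
 * Deliberately incomplete test: it exercises paths C and D only.
 * A coverage report would show the assignments on paths A and B as
 * never executed.
 */
bool test_adc_to_percent_partial(void)
{
    return (adc_to_percent(512, 1024) == 50U) &&
           (adc_to_percent(2048, 1024) == 100U);
}
```

The test passes, yet two of the four paths have never run. Without a coverage number, a passing test suite like this one looks the same as a thorough one.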

Code Verification

Code verification is similar to test coverage except that instead of measuring how much code is covered, we are measuring what percentage of tests are actually passing or failing. For example, we can generate a numeric value based on the following factors:

- The test being executed

- Passing the test

- Requirements coverage

Using these metrics, we could generate a numeric value in the range of 0 to 10 based on how successfully the tests are executed. This gives us a metric to review. If we have not met the desired code verification level, we can go back and improve the testing process until we achieve it, which also corresponds to a desired code quality level.
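How the three factors are combined is a team decision. The sketch below assumes one possible scheme: each factor is expressed as a ratio, the three ratios are averaged with equal weight, and the result is scaled to the range 0 to 10. The struct, the field names, the function names, and the equal weighting are all assumptions made for illustration.

```c
#include <stdint.h>

/* Hypothetical inputs; the field names are invented for this sketch. */
typedef struct {
    uint32_t tests_total;      /* tests defined */
    uint32_t tests_executed;   /* tests actually run */
    uint32_t tests_passed;     /* executed tests that passed */
    uint32_t reqs_total;       /* requirements in scope */
    uint32_t reqs_covered;     /* requirements with at least one test */
} verification_data_t;

/* Returns part / whole, or 0.0 when there is nothing to measure. */
static double ratio(uint32_t part, uint32_t whole)
{
    return (whole == 0U) ? 0.0 : ((double)part / (double)whole);
}

/* Assumed scheme: equal-weight average of the three ratios, scaled to 0..10. */
double verification_score(const verification_data_t *d)
{
    double executed = ratio(d->tests_executed, d->tests_total);
    double passed   = ratio(d->tests_passed, d->tests_executed);
    double covered  = ratio(d->reqs_covered, d->reqs_total);

    return (10.0 * (executed + passed + covered)) / 3.0;
}
```

For example, if half of the defined tests were run, all of those passed, and half of the requirements have a test, the three ratios are 0.5, 1.0, and 0.5, giving a score of about 6.7. A team that cares more about requirements coverage could weight that ratio more heavily; the point is that the formula is written down and computed the same way every time.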

Conclusions

In order to have quality software, the word quality needs to be defined by the development team. That definition should include measurable metrics that can be easily tracked and monitored throughout the entire development process. In this article, we’ve explored some high-level definitions that should form the minimum set of metrics and processes followed in order to create a quality code base. Implementing these metrics will help you not just lift general code quality but also eliminate and prevent software defects.
