Getting the most performance from printf

The oldest, tried and true debugging technique is to sprinkle printf statements throughout embedded software in the hope that an insight to the system behavior will be gleaned. Using printf is not always advisable and can unforeseen real-time implications for the system. Let’s examine the fundamental issues with printf and then a few techniques that can be used to get the most performance from it.

Understanding the issues with printf

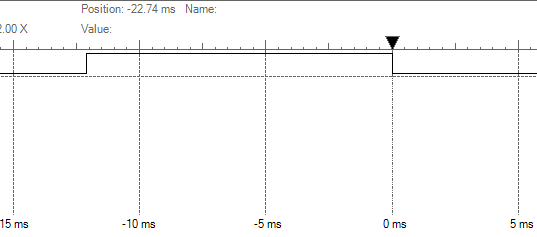

Using printf comes with a few problems that are often overlooked by developers. The first, a developer must bring in a standard C library that will undoubtedly increase ROM and RAM usage. Second, every time a printf stamen is used, the system becomes blocked until all characters have been transmitted which can result in significant real-time performance degradation. Take for example, a simple string to output such as “Hello World!” being printed out a UART at 9600 (still a very common occurrence). I performed a simple timing measurement on an STM32 and as shown in Figure 1, it took 12.5 milliseconds for the string to be formatted and printed to the terminal.

Figure 1 – Printing “Hello World!”

Adding any string formatting makes the situation even worse! Printing the system state to the terminal using printf(“The system state is %d”, State) results in a 21 millisecond application delay as the string is formatted and transmitted. One might argue that running at 9600 baud is ridiculous but even increasing to 115200 would still result in 1.05 and 1.75 milliseconds respectively to transmit these two messages. A lot of processor bandwidth and potential real-time performance hits for minimally useful information.

Performance Technique #1 – Create a non-blocking printf

Every printf version that I have ever encountered in the wild has been the blocking type. Once a call to printf is made the application stops execution until every character has been successfully transmitted. Amazingly inefficient! An alternative, is to create a non-blocking version. A non-blocking printf version will

- format the string

- stuff the formatted string into a transmit buffer

- initiate the transmission for the first character

- let an interrupt service routine handle the remaining characters in the transmit buffer

- continue executing code.

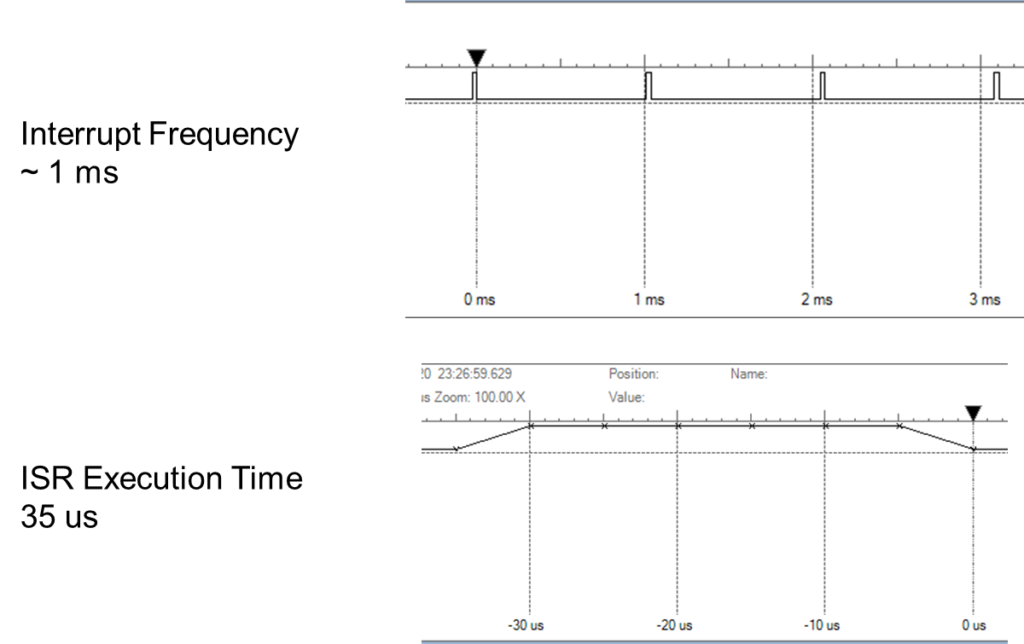

The big hit for a non-blocking printf is the setup time which on the STM32 at 9600 baud I found to vary between 0.8 and 1.8 milliseconds. After the initial setup time, a transmit interrupt occurs approximately every one millisecond and requires 35 microseconds to stuff the next character into the UART transmit register before getting back to doing useful work. Figure 2 shows the periodic interrupt and also the interrupt execution time. Keep in mind that the execution time does not include the interrupt overhead which is less than 25 clock cycles in this case.

Figure 2 – Non-blocking printf performance

Performance Technique #2 – Increase the baud rate

My mind is blown that so many developers still will default their UART to 9600. Serial hardware today can handle baud rates of 1 Mbps or better! Occasionally, I’ll encounter someone bold enough to set the baud rate at 115200. Unless there is a potential electrical or hardware related issue with running up the clock, there is nothing wrong with setting the baud to 1 Mbps and getting debug messages out as fast as possible in order to minimize real-time performance issues. The original blocking printf for “Hello World!” would only block for 120 microseconds. Far more acceptable than 12.5 milliseconds.

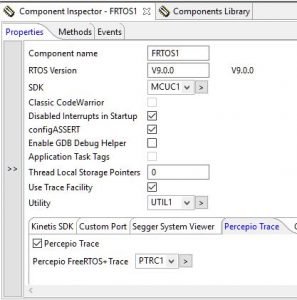

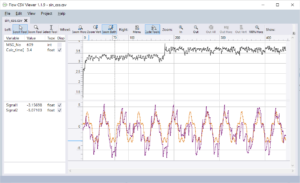

Performance Technique #3 – Use SWD

Modern day microcontrollers have the printf performance issues in mind when they developed the silicon. For example, developers that take advantage of the debugging capabilities of the ARM Cortex-M parts can skip the UART and use the internal debug module to transmit printf messages back through the debugger to the IDE. Skipping the UART in this manner saves not only setup but the internal hardware mechanism minimizes software overhead! An internal buffer is filled with the message and the debug hardware automatically handles transmission to the debug probe which results in minimal impact on the applications real-time performance.

Conclusion

Few developers are going to toss out their favorite, tried and true printf debugging techniques. In today’s modern microcontroller hardware, there exists multiple options for improving the performance and efficiency of printf that minimize impact on real-time performance. For developers looking to try these improvements themselves, I’ve put together a Keil project for the STM32 that demonstrate how to use these techniques. The project can be found here. (Examine uart_app.c for the most interesting code).

Struggling to keep your development skills up to date or facing outdated processes that slow down your team, raise costs, and impact product quality?

Here are 4 ways I can help you:

- Embedded Software Academy: Enhance your skills, streamline your processes, and elevate your architecture. Join my academy for on-demand, hands-on workshops and cutting-edge development resources designed to transform your career and keep you ahead of the curve.

- Consulting Services: Get personalized, expert guidance to streamline your development processes, boost efficiency, and achieve your project goals faster. Partner with us to unlock your team's full potential and drive innovation, ensuring your projects success.

- Team Training and Development: Empower your team with the latest best practices in embedded software. Our expert-led training sessions will equip your team with the skills and knowledge to excel, innovate, and drive your projects to success.

- Customized Design Solutions: Get design and development assistance to enhance efficiency, ensure robust testing, and streamline your development pipeline, driving your projects success.

Take action today to upgrade your skills, optimize your team, and achieve success.

There is another way to a non-blocking printf(). Usually printf() calls putchar(), so the bottleneck is here, besides the time printf() needs to do the formatting.

All you have to do is to overwrite putchar() with a version that writes into a ring buffer and let an associated interrupt routine do the actual transmission. So the library standard printf() may be used, and it avoids the workaround with sprintf() and a static print buffer.

This approach has been working for me since good old 8051 days.

Besides, printf() is not only good for debugging 🙂

— Hans-Peter